Try It

The best way to understand the Playground is to open one. Pick a model and start generating.

Nano Banana 2

Google’s fast image generation and editing

Veo 3.1

Google DeepMind’s latest video model with sound

ElevenLabs Music

High quality, realistic music generation

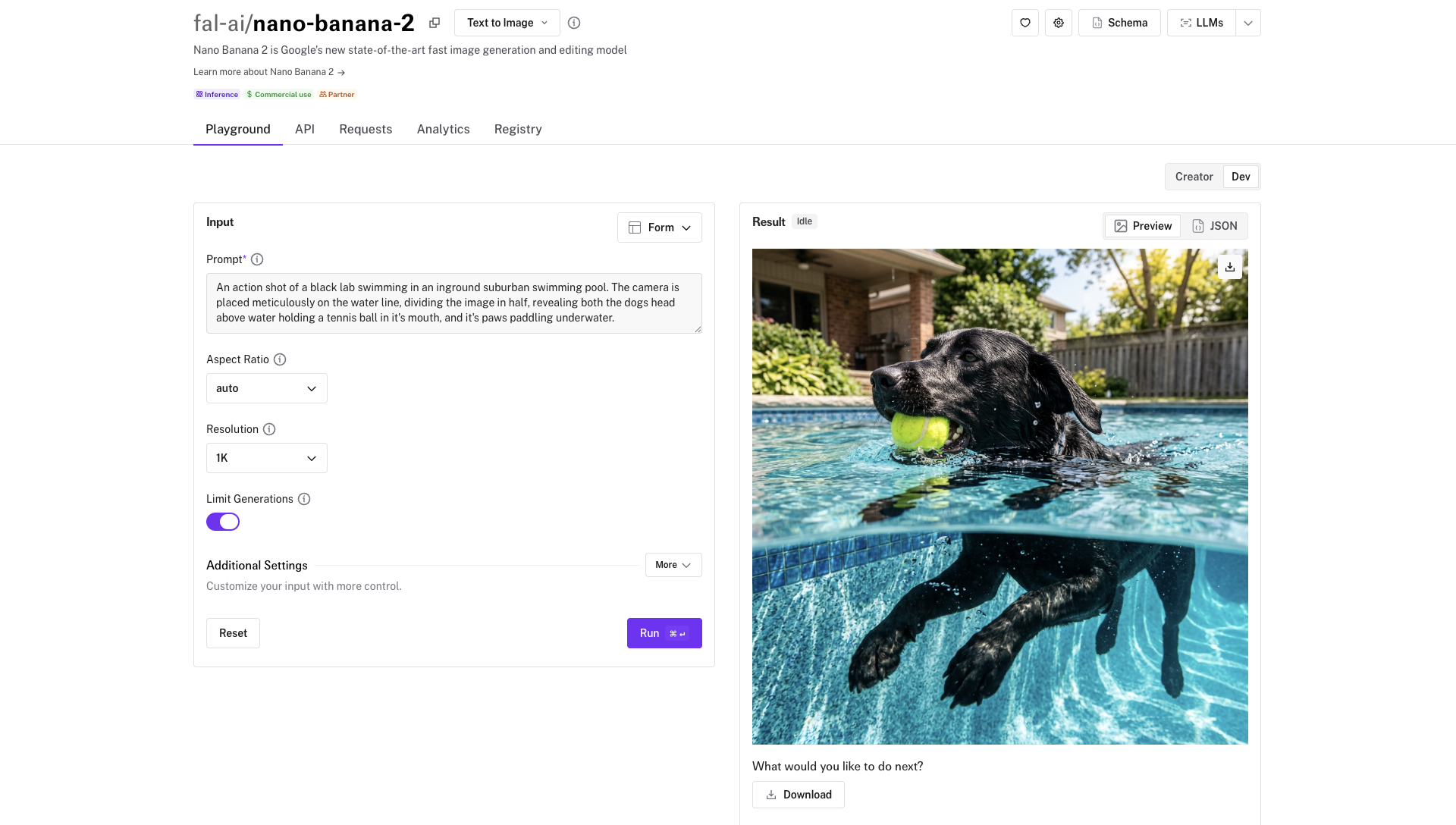

What the Playground Shows

Each model page on fal.ai (for example, Nano Banana 2) is organized into tabs. The Playground tab lets you fill in inputs and run the model directly in your browser. The API tab shows the full input and output schemas with type information, so you know exactly what fields are available and what the response looks like. The page also displays pricing, average latency, and ready-to-copy code examples.

Testing a Model

Find a model

Browse the model gallery or search for a specific model. Click on it to open its page.

Fill in the inputs

The Playground form is auto-generated from the model’s input schema. Required fields are marked, and optional fields have sensible defaults. For models that accept images, video, or audio, you can upload files directly.

Run the model

Click Run to submit your request. The result appears below the form, typically within a few seconds.
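The request that Run submits can also be sent from code. The following is a minimal sketch, assuming the `fal-client` Python package is installed and a `FAL_KEY` environment variable is set. The endpoint ID and the input field names are placeholders, not taken from a real model page; use the ones shown on the model's API tab.

```python
import os

# Hypothetical endpoint ID; replace it with the ID shown on the model page.
ENDPOINT_ID = "fal-ai/example-model"


def build_arguments(prompt: str, **optional) -> dict:
    """Combine the required field with any optional overrides.

    Optional fields that are left out are simply not sent, so the model
    falls back to its own defaults. The field names here are assumptions.
    """
    if not prompt:
        raise ValueError("prompt is required")
    return {"prompt": prompt, **optional}


def run(arguments: dict):
    """Submit the request and block until the result is ready."""
    # Imported lazily so the helper above works without the package installed.
    import fal_client  # pip install fal-client; authenticates via FAL_KEY

    return fal_client.subscribe(ENDPOINT_ID, arguments=arguments)


if __name__ == "__main__":
    args = build_arguments("a watercolor fox")
    if os.environ.get("FAL_KEY"):
        print(run(args))
    else:
        print("Set FAL_KEY to send this request:", args)
```

Keeping argument construction separate from the network call makes it easy to check the payload before spending credits on a run.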

Copying Code

Every Playground result includes generated code that reproduces the exact request you just ran. Click the code tab to see examples in Python, JavaScript, and cURL, then copy them directly into your project.

Your Own Apps

When you run fal deploy, the output includes a Playground URL for your app. This means anyone with access can test your endpoints through the same interface that powers the model gallery.

Naming a field with an image_url suffix renders it as an image upload widget, and wrapping a field in Hidden() keeps it accessible via the API but hides it from the Playground form.
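To make these rules concrete, here is a toy sketch of how a schema-driven form could be derived. It is purely illustrative and is not fal's implementation: the schema, the field names, the `hidden` marker, and the widget names are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Any, List

# Hypothetical input schema in JSON Schema style. The "hidden" key is an
# illustrative stand-in for a field wrapped in Hidden().
SCHEMA = {
    "required": ["prompt"],
    "properties": {
        "prompt": {"type": "string"},
        "num_images": {"type": "integer", "default": 1},
        "reference_image_url": {"type": "string"},
        "debug_token": {"type": "string", "hidden": True},
    },
}


@dataclass
class FormField:
    name: str
    widget: str
    required: bool
    default: Any = None


def build_form(schema: dict) -> List[FormField]:
    """Turn a schema into the list of fields a form would render."""
    required = set(schema.get("required", []))
    fields = []
    for name, spec in schema["properties"].items():
        if spec.get("hidden"):
            # Still part of the schema, so still usable via the API;
            # it is only left out of the rendered form.
            continue
        if name.endswith("image_url"):
            widget = "image_upload"
        elif spec.get("type") == "integer":
            widget = "number"
        else:
            widget = "text"
        fields.append(
            FormField(name, widget, name in required, spec.get("default"))
        )
    return fields


for field in build_form(SCHEMA):
    print(field)
```

In this sketch the hidden field never reaches the form, while the field ending in image_url is the only one that gets an upload widget.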

Playground vs Sandbox

The Playground and the Sandbox serve different purposes. The Playground is for testing a single model with specific inputs and copying code. The Sandbox is for comparing multiple models at once, with features like model sets, cost estimates, search across past generations, and shareable links.

| | Playground | Sandbox |
|---|---|---|
| Purpose | Test one model, copy code | Compare multiple models |
| Where | Each model’s page | fal.ai/sandbox |
| Your own apps | Yes, after fal deploy | Yes, can be added manually |
| Code generation | Python, JS, cURL | Not available |
| Sharing | Not available | Shareable links with previews |